Historical processes of individualization and impact on shared private spheres and the collective essence of information.

Fabien Lechevalier

In this blog post, Fabien Lechevalier explores the intricate historical and contemporary dimensions of privacy.

Protesters take part in a demonstration in December about the Paris 2024 Olympic and Paralympic Games and the use of surveillance cameras. Photograph: Geoffroy van der Hasselt/AFP/Getty Images

The exploration of historical processes in privacy individualization reveals privacy as a complex, evolving concept, often narrowly understood by the law. Initially framed as an individual private sphere, the concept emphasized secrecy and isolation, epitomized by the private sphere/public sphere dichotomy. Sociologically, intimacy first manifested in shared spheres before transitioning to individual ones. Only in the mid-twentieth century did the notion of an individual private sphere gain prominence. However, the legal focus on protecting private information based on an individualist conception has overshadowed the study of shared private spheres persisting today.

In the digital era, recent developments in information technology both emphasize and transform shared private spheres. Despite the individualization process, shared private spheres endure because information is collective by nature. Individuals exist within networks: families, friends, and online groups. Moreover, information processing technologies, particularly Big Data and algorithms, introduce a new shared private sphere by collectively exploiting individual data. This exploitation capitalizes on network effects: data points enrich one another and produce new information of value to the private sector, the public sector, and the common interest. This collective function of information, accentuated by algorithmic processing, holds potential for social and economic innovation, fostering initiatives in sectors such as health, justice, and smart cities.

Furthermore, thinking of privacy as a collective or relational good implies that privacy is contextual. Nissenbaum's Contextual Integrity (CI) framework conceptualizes information privacy in terms of context-specific social norms, which vary across settings and relations. CI identifies five parameters (data subjects, senders, recipients, information types, and transmission principles) to model context-relative informational norms.
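To make the five parameters concrete, here is a minimal Python sketch. It is not part of Nissenbaum's framework itself: the names `InformationFlow`, `ContextualNorm`, and `respects_norm`, as well as the healthcare example values, are illustrative assumptions, and real contextual norms are far richer than a fixed set of permitted flows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InformationFlow:
    """One information flow, described by the five CI parameters."""
    data_subject: str
    sender: str
    recipient: str
    information_type: str
    transmission_principle: str


@dataclass(frozen=True)
class ContextualNorm:
    """Hypothetical simplification: a context and the flows it treats as appropriate."""
    context: str
    appropriate_flows: frozenset


def respects_norm(flow: InformationFlow, norm: ContextualNorm) -> bool:
    """Return True if the flow matches one of the context's appropriate flows."""
    return flow in norm.appropriate_flows


# Illustrative values only (assumed for this sketch, not taken from the post).
to_doctor = InformationFlow(
    data_subject="patient",
    sender="patient",
    recipient="doctor",
    information_type="medical condition",
    transmission_principle="confidentiality",
)
to_employer = InformationFlow(
    data_subject="patient",
    sender="doctor",
    recipient="employer",
    information_type="medical condition",
    transmission_principle="sold without consent",
)

healthcare = ContextualNorm("healthcare", frozenset({to_doctor}))

print(respects_norm(to_doctor, healthcare))
print(respects_norm(to_employer, healthcare))
```

Changing any one of the five parameters yields a different flow, which is why the same piece of information can be appropriate in one context and a violation in another.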

While CI offers a precise framework for understanding information privacy, it faces the challenge of determining the actual, prevailing contextual norms. Policymakers and engineers who seek to protect and respect privacy must understand existing social expectations regarding information flow. Empirical research therefore becomes crucial for identifying these expectations and for exploring privacy norms in contexts such as healthcare, education, and the home. Existing studies employ interviews and surveys to infer social norms from individual opinions, preferences, and attitudes. However, this approach has limitations: aggregating individual views does not directly answer the question of what the shared social expectations actually are. Researchers need to move beyond individual perspectives and consider collective aspects to develop a more comprehensive understanding of social expectations regarding information flow in specific contexts.

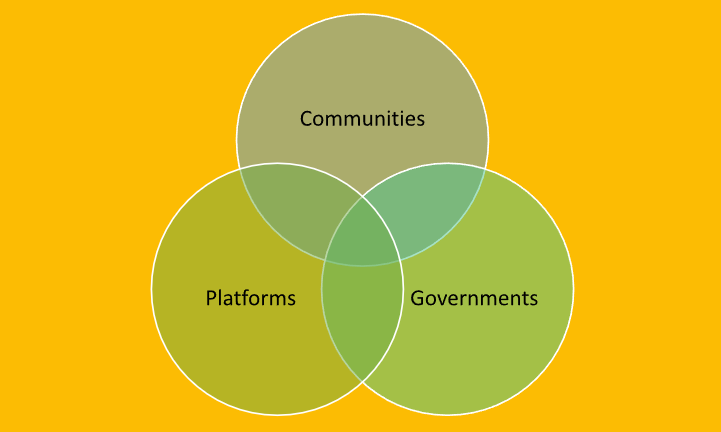

This project is inspired by Daniel Susser and Matteo Bonotti's work on using the OECD's deliberative mini-publics to study contextual integrity norms, and extends it with participatory design and co-design principles drawn from the legal design literature. By actively engaging participants in all phases, participatory design transforms them from passive subjects into active co-creators in a collaborative, inclusive deliberative process. The consensus sought is collectively determined and reflects diverse perspectives.

Beyond a methodology augmented with research literature on design studies, this work proposes as a case study AI-powered video surveillance during the Paris 2024 Olympic Games. It addresses concerns about algorithmic video surveillance cameras and the privacy implications of entrusting machines to autonomously identify suspicious behaviors. In April, the French Parliament passed a law related to the Olympic Games that allows the use of algorithmic cameras. This raises questions about how these systems discern threats, as well as broader issues surrounding individual freedoms. Noémie Levain, responsible for legal and political analysis at La Quadrature du Net, notes, "Behind this tool lies a political vision of public space, a desire to control its activities." She emphasizes that any surveillance tool becomes a potential source of control and repression for both law enforcement and the state.

This project comprises three key stages designed to build a comprehensive understanding of social expectations regarding information flow in this specific context:

3.1 Stage 1 (Recruitment): Participants, mirroring the general public, form forums of approximately 20 Paris inhabitants. They are selected through stratified random sampling, which ensures a diverse and inclusive group reflecting demographics such as age, gender, location in Paris, cultural background, employment type, and household environment. The recruitment process is vital for fostering a deliberative environment where a variety of views are represented.

3.2 Stage 2 (Experts and Information): Participants gain access to a broad range of expertise. Experts include academic professionals (philosophers, ethicists, political scientists, and lawyers), city (and government) representatives (legislators and civil servants with policy-making experience), and industry representatives (privacy/data protection officers or ethics professionals assisting tech firms in navigating privacy laws).

3.3 Stage 3 (Deliberation and Facilitation): Following expert briefings, participants engage in a deliberative exchange. The goal is to reach consensus (defined as agreement at the 80% level or more) on existing social norms about privacy in the specific context of algorithmic video surveillance. The deliberation process involves assessing disruptions caused by this technology and determining necessary interventions or regulations. Expert facilitators guide sessions, encouraging active listening, empathetic engagement, and critical thinking. Small-group work enables in-depth and inclusive deliberation, and participants are encouraged to reflect between sessions. The project concludes with a compiled report of recommendations collaboratively agreed upon during the deliberative process.
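Two steps in the stages above are mechanical enough to sketch in code: the stratified random sampling of Stage 1 and the 80% consensus threshold of Stage 3. The Python below is a simplified illustration under stated assumptions, not the project's actual procedure. The function names, the single `area` stratum, the proportional (largest-remainder) seat allocation, and the synthetic resident pool are all hypothetical; a real recruitment would stratify on several demographics at once.

```python
import random
from collections import Counter, defaultdict


def stratified_sample(population, stratum_key, forum_size, seed=None):
    """Draw forum_size people, giving each stratum seats proportional to its share."""
    if forum_size > len(population):
        raise ValueError("forum_size cannot exceed the population size")
    rng = random.Random(seed)

    # Group people by the value of the chosen stratum attribute.
    strata = defaultdict(list)
    for person in population:
        strata[person[stratum_key]].append(person)

    # Largest-remainder allocation: floor each quota, then hand the
    # leftover seats to the strata with the largest fractional parts.
    total = len(population)
    quotas = {name: forum_size * len(members) / total
              for name, members in strata.items()}
    seats = {name: int(quota) for name, quota in quotas.items()}
    leftover = forum_size - sum(seats.values())
    by_remainder = sorted(quotas, key=lambda n: quotas[n] - seats[n], reverse=True)
    for name in by_remainder[:leftover]:
        seats[name] += 1

    # Draw the allotted number of people at random within each stratum.
    forum = []
    for name, members in strata.items():
        forum.extend(rng.sample(members, seats[name]))
    return forum


def reached_consensus(agreeing, total, threshold_percent=80):
    """True if at least threshold_percent of participants agree (integer arithmetic)."""
    return total > 0 and agreeing * 100 >= threshold_percent * total


# Synthetic pool of 100 residents across three assumed areas (illustrative only).
residents = (
    [{"id": i, "area": "north"} for i in range(50)]
    + [{"id": i, "area": "south"} for i in range(50, 80)]
    + [{"id": i, "area": "east"} for i in range(80, 100)]
)
forum = stratified_sample(residents, "area", forum_size=20, seed=1)
seats_by_area = Counter(person["area"] for person in forum)

print(seats_by_area)
print(reached_consensus(16, 20))
print(reached_consensus(15, 20))
```

With a forum of 20, the threshold means at least 16 participants must agree on a given norm; 15 would fall short. Which individuals are drawn depends on the seed, but the number of seats per stratum does not.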
